I just came back from the Scheme and Functional Programming Workshop at Montréal, hosted by Marc Feeley and an excellent organisation team. It’s been a fun couple of days putting faces to emails and IRC nicks and attending a handful of pretty interesting talks. Here’s a quick report.

My favourites were the two invited papers. The first day, Olin Shivers presented a pretty cool hack in a delicious talk that consisted of actually writing the code he was explaining. Under the title Eager parsing and user interaction with call/cc, he showed us how call/cc is not just an academic toy, but can be put to good use in writing a self-correcting reader for s-expressions on top of the host scheme’s read (or any other parser, for that matter).

He started from the very basics, explaining how input handling and buffering are usually delegated to the terminal driver, which offers a rather dumb, line-oriented service. Wouldn’t it be nice if, as soon as you typed an invalid character (say, a misplaced close paren), the reader complained, without waiting for the whole line to be submitted to read? Well, all we need to do is implement the input driver in scheme, and he proceeded to show us how. As you know, reading s-expressions is a recursive task, meaning that when you detect invalid input in the middle of a partial s-expression, or want to delete a character, you might find yourself somewhere deep inside a stack of recursive calls and you’ll need to backtrack to a previous checkpoint. That’s an almost canonical use case for call/cc, provided you use it intelligently. Let me tell you that Olin is quite capable of using call/cc as it’s meant to be used, as he immediately demonstrated. I’m skipping the details in the hope that a paper will be available soon.

As i mentioned, his talk was a beautiful example of live coding: he showed us the skeleton of the implementation and filled it in as he explained how it should work. Olin does know how to write good code, and it was a pleasure (and a lesson) seeing him do just that. It was all so schemish: a terminal and emacs in the venerable twm, and that’s all you need to create beauty.
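To give a flavour of the eager behaviour described above, here is a minimal sketch in Python. It is emphatically not Olin’s code: Python has no call/cc, so the captured continuations are replaced by an explicit stack of checkpoints (the nesting depth after each accepted character), and deleting a character simply pops back to the previous checkpoint. The class name `EagerParenReader`, the `feed` method and the choice of backspace character are all made up for this illustration, and strings and comments are ignored.

```python
class EagerParenReader:
    """Check s-expression input one keystroke at a time (parens only)."""

    BACKSPACE = "\x7f"  # assumption: the terminal sends DEL for backspace

    def __init__(self):
        self.chars = []          # characters accepted so far
        self.checkpoints = [0]   # nesting depth before any input, then after each char

    def feed(self, ch):
        """Process one keystroke; return False if it is rejected on the spot."""
        if ch == self.BACKSPACE:
            if self.chars:
                # Backtrack: drop the last character and its checkpoint.
                self.chars.pop()
                self.checkpoints.pop()
            return True
        depth = self.checkpoints[-1]
        if ch == "(":
            depth += 1
        elif ch == ")":
            if depth == 0:
                return False     # misplaced close paren: complain immediately
            depth -= 1
        self.chars.append(ch)
        self.checkpoints.append(depth)
        return True

    def text(self):
        return "".join(self.chars)


reader = EagerParenReader()
accepted = [reader.feed(ch) for ch in "(a b)"]
rejected = reader.feed(")")            # no open paren left to close
reader.feed(EagerParenReader.BACKSPACE)
after_delete = reader.text()
```

The point of the sketch is only the interaction model: every keystroke is judged as it arrives, and undoing one restores an earlier parser state instead of re-reading the whole line. With call/cc, that earlier state is the continuation of the recursive reader itself, so no hand-written checkpoint stack is needed.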

The second invited talk was by Robby Findler, who gave us a tour of Racket’s contract system, how to use it and the subtleties of implementing it properly. The basic idea dates back to Meyer’s design by contract methodology of the early nineties, and was subsequently explored further by several authors, including Robby. Simple as they sound at first sight, good contracts are not trivial to implement. For instance, it’s vital to assign blame where blame is due, and Robby gave examples of how tricky that can get (and how Racket’s contracts do the right thing). Another subtlety arises when you try to write contracts asserting a property of an input data structure (say, you want to ensure that an argument is actually a binary search tree). The problem here is that checking the contract can alter the asymptotic complexity of the wrapped function (e.g., a lookup can go from O(log n) to O(n), an exponential degradation). Racket provides an ingenious fix for that problem, by means of lazy contracts that are checked as the input is traversed by the “real” function.
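The two subtleties above (blame, and lazy checking of a data structure) can be sketched in a few lines of Python. This is an illustration of the ideas only, not Racket’s contract library or its API: `ContractViolation`, `contract`, `Node` and `checked_lookup` are hypothetical names invented for the example, and the tree is assumed to hold distinct, comparable keys.

```python
class ContractViolation(Exception):
    def __init__(self, blamed, message):
        super().__init__(f"{blamed} broke the contract: {message}")
        self.blamed = blamed


def contract(pre, post, server, client="caller"):
    """Wrap a one-argument function; blame the client for bad input,
    the server (the function's own module) for a bad result."""
    def wrap(fn):
        def checked(arg):
            if not pre(arg):
                raise ContractViolation(client, f"bad argument {arg!r}")
            result = fn(arg)
            if not post(result):
                raise ContractViolation(server, f"bad result {result!r}")
            return result
        return checked
    return wrap


class Node:
    def __init__(self, key, left=None, right=None):
        self.key, self.left, self.right = key, left, right


def checked_lookup(tree, key):
    """Search a tree, checking the BST ordering only on the nodes visited.

    An eager check would walk all n nodes before an O(log n) lookup;
    here the check rides along with the traversal instead.
    """
    lo = hi = None               # open interval the current node must fall in
    node = tree
    while node is not None:
        if (lo is not None and node.key <= lo) or (hi is not None and node.key >= hi):
            raise ContractViolation("tree provider", f"key {node.key!r} is out of order")
        if key == node.key:
            return True
        if key < node.key:
            hi, node = node.key, node.left
        else:
            lo, node = node.key, node.right
    return False


@contract(pre=lambda x: x >= 0, post=lambda r: r >= 0, server="sqrt module")
def broken_sqrt(x):
    return -1                    # deliberately wrong, to trigger server blame


good = Node(5, Node(3), Node(8))
bad = Node(5, Node(7), Node(8))  # 7 sits in the left subtree of 5
```

Calling `broken_sqrt(4)` blames the "sqrt module", while `broken_sqrt(-1)` blames the caller. On the malformed tree, `checked_lookup(bad, 8)` succeeds without ever noticing the problem, because the offending node is never visited; `checked_lookup(bad, 3)` walks into it and raises. That is the trade-off of the lazy approach: violations are reported only on the paths the real function actually takes.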

There was also real-time scheme in Robby’s talk, although in a much more sophisticated way, thanks to Slideshow’s magic, which lets you embed code files and snippets in a presentation, evaluate them and show the results in the same or a new slide. Very elegant. He also used DrRacket a bit during the introductory part of his talk, and i’m starting to understand why some people are so happy with Racket’s IDE: it definitely felt, in his hands, professional and productive. And also kind of fun.

There were also lightning talks. I’m of course biased, but the one i enjoyed most was Andy Wingo’s Guile is OK!, where he showed us how Guile has overcome the problems, perceptual and real, of its first dozen years. For instance, he reminded us how Guile was traditionally a “defmacro scheme”, and he himself a “defmacro guy”… until he studied Dybvig’s work in earnest and ported his syntax-case implementation to Guile, to become a “syntax-case man” as Guile gained full syntax-case support. I hope i’ll reach that nirvana some day; i still find syntax-case too complex and plagued by unintuitive corner cases that make me uneasy (one of them was shown by Aaron Hsu in another lightning talk, where apparently none of us was able to correctly interpret 10 lines of scheme), but that’s surely just ignorance on my part. There are many other things that make Guile a respectable citizen of the Scheme Underground, which were also listed in Andy’s talk: i’ll ask him for a PDF, but in the meantime you can just try Guile and see :).

Although this time we didn’t have a talk by Will Clinger, to me it’s always a pleasure to listen to what he has to say, even if only as comments on other people’s talks. For instance, i enjoyed his introduction to Alex Shinn’s R7RS progress report. Will showed us three one dollar coins, of the same size and shape, but different, as he described, in almost everything else. And yet, all three were useful and recognised as (valid) dollars by the Canadian vending machines at the entrance. He thinks that says something about standards, but he left it to us to decide exactly what.

Finally, let me mention that this workshop has alleviated all my quibbles with what i’ve sometimes perceived as a fragmented, almost dysfunctional, community, made up of separate factions following their own path in relative isolation. My feeling during the workshop was nothing of the sort; rather, i’m back with the conviction that there’s much more uniting us than breaking us apart, and that there’s such a thing as a scheme underground ready to take over the world. Some day.

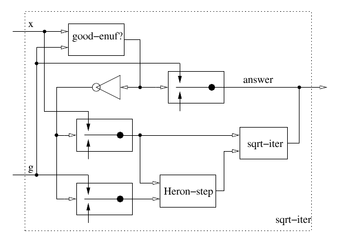

Finally, Gerry gave a lightning talk with yet another piece of food for thought. The gist of it was drawing our attention to the possibility of exploiting a posited parallelism between the theory and methods used to solve differential equations on the one hand, and programs on the other. There’s a way of approaching the solution of a differential equation that is, if you will, algebraic in nature: one manipulates expressions algebraically to simplify them and eventually obtain a closed form solution, or, if that’s not possible, creates numerical approximations to evolve the boundary conditions in the state space as a function of discrete time steps. You end up that way with something that works as a solution, but, most of the time, without a deep understanding of the traits that make it a solution: in the spirit of the robust design ideas sketched before, we should probably be asking for more qualitative information about how solutions behave as we change the boundary or initial conditions of our problem. As it happens, mathematicians have a way of analyzing the behaviour of solutions to differential equations by studying their Poincaré
